! THIS BLOG IS OUT OF DATE. For a more recent analysis see this blog !

Dear MDPI,

Your journal publications have grown dramatically, and quite extraordinarily. But there are sceptics who suggest that this reflects low standards and distorting financial incentives. I was one of them. To prove my views I explored trends in publications of 140 journals for which data were available from 2015 onwards. But doing so proved me wrong; I could not sustain my sceptical view. Instead I think I have a better understanding of why researchers are so keen to publish with you. But my exploration also makes plain challenges that you will face in the future, that are caused by your remarkable success. In this letter I describe your growth, the lack of substance to sceptics’ criticism and the challenges which your success creates. I also describe potential solutions to them. Here is the word version of it.

- The Remarkable Growth of MDPI

MDPI’s growth is phenomenal. In barely ten years it has transformed from a small bespoke publisher managing a few journals to a major player publishing over 200 journals and tens of thousands of papers (Figure 1A). Moreover this growth is not due to new titles; it is driven by the popularity of its older journals (Figure 1B). 2018 in particular was a remarkable year for the company in which a major rise in submissions across almost the entire portfolio drove dramatic increases in the number of papers published. That fact is most plainly seen in Figure 1C. This shows that in 2018 the vast majority of the journals saw submissions increase by at least 50%, sometimes much more, resulting in thousands of extra MSes.

This growth reflects a number of unusual features. Processing times are short – in 2018 the median time from submission to publication was only 39 days (!). The journals are entirely open access, with all costs paid for by Author Processing Charges (APC). APC range from 350 to 2000 CHF. The median value charged per journal is 1000 CHF. The more popular journals charge more, and most published papers in 2018 paid 1700-2000 CHF. But these rates still make the journals relatively cheap to publish in (for gold standard open access). Furthermore, as open access journals, the finished products are easily accessible and citable. Growth has been facilitated by the large number of special issues each journal promotes. These are curated by guest editors. It is easier to identify speedy reviewers for special issues as they leverage the networks of the guest editors. The journals also have (I am told) an excellent journal management system that is easy to work with. Finally, MDPI is also more transparent than other publishers. Its website is easily navigable and presents useful material about all its journals, such as rejection rates, which others do not make so easily available.

These features suggest that MDPI has become an increasingly attractive venue for researchers to publish because of the quality of service it offers. This service means that increasingly large numbers try to place their work in its journals. It publishes more papers because more and more people are sending in papers to review.

But it is also possible that the growth in papers reflects low standards. In some academic quarters there is scepticism as to the quality of MDPI journals. The speed of review and the abundance of papers make it harder to maintain the highest academic standards. Good science can be slow. Hundreds of special issues, each with their own editors, will make it hard to keep consistent standards across such diversity. Similarly the variety of topics covered within individual journals also makes overseeing the same high standards of review across so many different subjects harder. The more popular journals also have hundreds of editorial board members. Again this raises problems of consistency. Finally a sceptic might also suggest that the APC for published papers introduces an incentive to publish weaker papers, because any paper, however poor, brings in revenue. I myself held those views before writing this document.

We can examine the sceptic’s case by considering how rejection rates have influenced the growth in publications and by exploring changes in revenue across the portfolio. I present these data below. Methods are described at the end of this document.

Figure 1: The growth of MDPI journals, papers and submissions (click here to see a pdf version)

- Growth is not associated with lowering publication standards

On average MDPI journals are relatively generous, with acceptance rates across the portfolio of older journals of just under 50%. However their more popular journals tend to have higher rejection rates. Rates have not changed significantly over the previous four years of growth (Figure 2A). These averages conceal a wide range of rejection rates, from below 10% to over 80%. These are shown in Figure 2B, which shows annual rejection rates for each journal against total submissions for that journal in each year.

More important than the headline rejection rates is what happens to these rates as submissions increase. When journals become more popular they have two choices. They can get bigger and publish more papers. Or they can raise their standards and increase their rejection rates. The former increases revenues from APC, the latter can raise reputations and standards. High rejection rates in good journals are generally over 80% with the best journals enjoying rates of over 90 or even 95%.

MDPI journals have generally not sought to become more exclusive as they have become popular. Few journals have rejection rates over 70%. At the same time they have not grown the larger journals by lowering standards and decreasing rejection rates. The most popular journals tend to have acceptance rates which have remained stable and between 50 and 70% even as interest in these journals has mushroomed (Figure 2C & D).

There is a tendency for the smaller journals to have lower rejection rates and for those rejection rates to have declined from 2015-2018 (Table 1). However this is a tendency not a rule. Some smaller journals have high rejection rates which have become more stringent with time (second panels of Figure 2C & D). Editors clearly have freedom to respond as they wish to increasing interest in their journals.

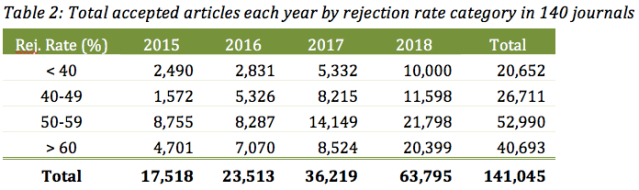

There is no evidence therefore to support the sceptic’s view that MDPI journals have grown because they have become easier to publish in. The journals with higher rejection rates publish more articles than those with lower rejection rates (Table 2). MDPI journals have become substantially more popular without lowering rejection rates of their largest journals.

- Increased popularity brings in more money, but pursuit of APC does not drive publications.

The growth of interest in MDPI journals has presented a considerable commercial opportunity, and the company has made good use of it. The company structures APC such that the more popular journals are more expensive to publish in (Table 3).

As a result of increasing demand, APC have risen across the portfolio (Table 4) to some consternation in the open access community. When a journal is younger and smaller it is cheaper to publish in. But when it receives more interest, the price rises.

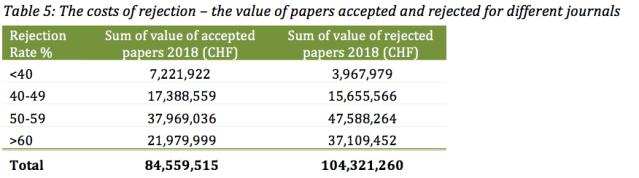

The value of APC and their importance to the business model of the company raises the prospect that it may be tempting to use journals as a means of raising revenue by accepting dubious papers. Papers may be accepted because of the APC they will generate rather than their scientific merit.

This is not the case. Journals with the lowest rejection rates raise the lowest revenues (Table 5). The value of rejected papers substantially exceeds the revenues of published papers. The commercial benefits of low rejection rates are negligible compared to the returns from journals which are harder to get into. The more exclusive journals are more lucrative for the publishers.
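
The comparison in the paragraph above can be sketched in a few lines of Python. This is only an illustration of the arithmetic: the two journals and all their figures are invented, not taken from Table 5, and `apc_split` is a hypothetical helper name. It assumes that every published paper pays the full APC and values each rejected paper at the same APC.

```python
def apc_split(submissions, rejection_pct, apc_chf):
    """Return (revenue from published papers, APC value of rejected papers) in CHF.

    Assumes the rejection rate applies to all submissions in the year
    and that every published paper pays the full APC.
    """
    rejected = submissions * rejection_pct // 100
    published = submissions - rejected
    return published * apc_chf, rejected * apc_chf


# Invented figures, for illustration only.
small_lenient = apc_split(submissions=200, rejection_pct=20, apc_chf=1000)
large_selective = apc_split(submissions=5000, rejection_pct=60, apc_chf=1800)

print("small, lenient journal:", small_lenient)
print("large, selective journal:", large_selective)
```

With these invented inputs, the selective journal earns far more than the lenient one even though it turns away papers whose APC would have exceeded what it actually collected.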

There is no evidence therefore to support the sceptic’s view that MDPI publishes more because it has a financial incentive to do so. Revenues have grown because charges change as researcher interest grows, not because low standards let in too many papers.

- Scope, Significance and the Place of MDPI in the Publishing Ecosystem

At first sight it is difficult for a sceptic to understand how such an increase in the quantity of publications cannot be associated with low standards. Where could all these publication-standard articles have come from so quickly? For sceptics the speed of the review process, the diversity of topics covered and the plethora of special issues and editors must mean that quality is not always maintained. In 2018, for example, 14 of the journals I have examined were accepting over 70% of all submissions, leading to just under 2,400 published papers.

But these low rejection rates are relatively uncommon. We cannot explain the growth of MDPI simply by claiming undue haste in the publication process. Another possible answer is that as the journals’ reputations have grown so they have attracted more interest from better researchers who are keen to get their work into these growing, new, open access journals. The guest-editing-special-collection model that MDPI uses to bring in content can easily be scaled up. This makes it possible to keep reviewing times short, and makes it easier to locate willing reviewers. The journals benefit from the free labour and networks that special issue editors are so keen to provide. In this scenario MDPI is just capturing a greater market share of the publishable research articles. This interpretation has added force because 63 professional scientific associations had partnered with or affiliated to MDPI journals by the end of 2018.

Another indication of quality – although I have not assembled the data here – is that MDPI journal citation rates are rising, and they are increasingly being listed on the large journal and citation databases. More scientists want to talk about the work that MDPI journals are producing. In 2018 117 journals were covered by the Web of Science Core collection, 54 by SCIE and 111 by Scopus.

The sceptic’s view of MDPI is just not supported by the data I have analysed. Indeed the stance is obtuse because it will not help us to understand the nature of the service offered by these journals or their appeal to the researcher community. MDPI journals have grown because submissions to them have grown. Therefore we have to understand why they have become more popular.

It may be helpful to conceptualise the publication choices of academics as a market place – offering various degrees of accessibility, reputation, prestige and ease of publication. MDPI journals have generally not used the increased submissions they have enjoyed to make themselves really hard to get into. After all, some of the better journals pride themselves on rejection rates of over 90%. This is not MDPI territory. They will not become the most prestigious journals in academia while rejection rates are so generally low.

There is a risk that the high acceptance rates and the speed of the process increase the chance of journals making mistakes, and thus bring down the value of publishing in them. But the risk of being associated with mistakes is deterring few people. The rising level of submissions clearly demonstrates that any doubts about quality do not inhibit many researchers from submitting their work to these journals. There is clearly an appetite for journals which are relatively easy to get into, and then more accessible for more people to read. That is why it is a mistake to see the growth and popularity of MDPI journals as a sign of poor quality. This view simply fails to recognise the value that other researchers find in the services MDPI offers.

It is better instead to recognise that MDPI may herald a new approach and philosophy to academic publishing. MDPI journals are not published physically; they only appear in electronic form. When journals were printed space was at a premium – it cost more to publish longer papers. The best papers were those which made significant scientific advances, but by using minimal words, diagrams and space. Progress was concise.

However, without space constraints it is possible for editors to argue that if something is true and valid then it deserves to be published. The electronic-only format means that space is never an issue. They never have to worry about less important papers displacing more important papers. Instead there is room for everyone.

Another way of putting this is that the scope and significance of the scientific advances that individual papers make need not be criteria for publication. I once wrote a paper that reviewed conservation spending by conservation NGOs across all of sub-Saharan Africa over a three year period, and compared its distribution to conservation priorities. This work was completely original; no one had ever tried to do anything like it before. It could have substantially improved our understanding of where conservation effort was directed. Yet the journal I sent it to rejected it within 24 hours because it did not have adequate ‘scope’. Important as I thought my work to be, there were more important things for time-pressed scientists to be reading.

This approach to scope and significance is most transparent in the ethos of a new multidisciplinary journal ‘J’ that MDPI launched in 2018. I have reproduced the full text describing the journal and its hopes below (Box 1). The important text is highlighted in bold. It states that reviewers will not be asked to consider the significance of contributions, merely their validity. The larger community of readers will determine how much any particular findings matter. Scientific progress no longer has to be defined by its combination of insight and concision. The most long-winded and minor advances can still be published. Scientific progress can be made if a paper merely adds insight, without any consideration of space, concision or efficiency.

Box 1: J — Multidisciplinary Scientific Journal (emphasis added)

| J (ISSN 2571-8800) is an international, peer-reviewed, multidisciplinary, open access journal, which provides an advanced forum for high-quality original research across the entire range of natural, applied, social, and formal sciences. J is dedicated to publishing all types of research outputs, including negative and confirmatory results in all disciplines and to make these results available to the relevant scientific communities shortly after peer-review. Our goal is to improve fast dissemination of new research results and ideas, and to allow research groups to build new studies, innovations and knowledge without delay . . . . Reviewers will be asked to evaluate only the soundness of the research approach, to detect the presence of any major flaws, and to make a recommendation regarding publication. They can choose between acceptance, minor revision, major revision or rejection, but only evaluating the validity of the scientific content. The significance of the contributions will be assessed by the appropriate scientific community at large, not by the two reviewers or the academic editor. We encourage scientists to publish their experimental and theoretical results in as much detail as possible so there is no restriction on the length of the papers. |

The arrival of J means that there is now an outlet that can publish any sort of work that derives from any combination of disciplines, at any length, about any subject at all. If it takes off, J could become a rather large and all-inclusive journal. It is possible that its rejection rates could be low. Or put differently, rejection rates are almost irrelevant as a measure of success. They are more likely to measure the clarity of the journal’s instructions to authors. Success would be measured post-hoc, by the authority and use that publications acquire.

- Remaining Challenges for MDPI journals

The reputation of MDPI journals is bound to grow. The mud that sceptics throw will not stick. After all, I have tried harder than most (just try recreating the figures and tables I made above) and, as a result, have failed all the more spectacularly. Occasional mistakes notwithstanding, these journals will be publishing too much interesting science for their content to be dismissed. Anyway there are ample cases of much more stringent journals publishing mistakes, and/or rejecting content that later formed the basis of Nobel prizes. MDPI is no different from the rest of the field.

The same logic applies commercially. With current rejection rates as they are, the MDPI model is relatively well insulated from mistakes and damage to the brand. It is still possible for mistakes to be made. But journals that are meant to be the best still drop absolute clangers, or miss amazing work. For MDPI a poor paper, published inappropriately, makes no difference to income. No one will unsubscribe from a free journal. The revenue from it is already earned and the deficiencies of any individual article are diluted by the sheer volume of other material. Bad work is buried in the mountain of other publications.

A dyed-in-the-wool sceptic may suggest that lowered standards, or the perceived risk of lowered standards, may provoke an institutional response from universities. If promotion or appointment committees are unimpressed by MDPI publications in a CV then this will dampen some of the enthusiasm. But it is difficult to see how this could make any dent in the vast number of submissions which MDPI journals receive. Many academics are enthusiastic publishers. They will try for both more exclusive publications and for papers which are more accessible and can reach larger audiences. The trend is for journals to proliferate. Very high rejection rates mean, by their very nature, that most academics do not succeed in reaching those journals most of the time. They have to publish somewhere and MDPI journals provide a good outlet to do so.

There are, however, two central challenges that will become increasingly apparent, especially now that MDPI journals have become more popular and more mainstream. These are

- Creating ever more content, in which scope and significance are not counted, and paper length is not controlled, creates unsupportable reading demands on researchers. MDPI may be able to publish more and more work. But we will not be able to read it.

- The increased financial returns create increasing expectations to give back to the researchers producing these papers.

Each challenge presents obvious solutions. The response to the first is to create more exclusive journals. MDPI’s reputation is of a generalist publisher with relatively low rejection rates and as a relatively easy place to get material out quickly. It could create more exclusive outlets which are cheaper to publish in (and hence more popular with respect to submissions) but where rejection rates are much higher. This would mirror the trend for more exclusive brands (Nature) to establish less exclusive versions of an exclusive brand.

It is easy to imagine a system whereby authors, or editors, who thought submissions had the scope and significance to merit broader readership could pay a small supplemental fee (50 CHF) for their MS to be considered in the more exclusive journals. Papers which were accepted would have all APC waived. This arrangement would be likely to result in a much more exclusive journal, full of more significant papers and with likely high rejection rates.

So, MDPI, why not set up a ‘flagship’ journal (you could even call it that) in which the best papers of your stable are co-published? This would be a more prestigious journal than your others, and particularly appealing because it would be free to publish in.

Another means of helping researchers to cope with excessive content is to produce bespoke review papers. The gold standard is provided by the Cochrane reviews, which consider both published and unpublished work, recognising that important negative results may not be publishable by normal standards. MDPI, however, changes the standards: it publishes negative and confirmatory results. But this makes it more important to produce reviews and digests which summarise ever more voluminous material.

The response to the second is to ‘give back’ more. The headline estimated figures of MDPI’s growth in recent years are rather eye-watering (Figure 1). Few commercial operations are able to demonstrate those levels of growth. They are a testimony to remarkable success.

These figures are also only estimates. They do not include 51 more recently created (and smaller) journals. But they also assume that all APC are paid in full. This is not the case. APC are often waived or reduced. A former CEO of MDPI recently stated that 20% of APC are not paid (see the comments on this thread). Good articles, editors, reviewers and members of affiliated professional societies all receive APC rebates. Moreover these are only estimated gross, not net, revenues. MDPI has also had to establish extensive physical and commercial infrastructure in order to service its journals. Its website and journal management system are complemented by three offices (in Switzerland, China and Spain) and, as of 2018, 1248 employees. Nevertheless the success of the model, and the ability of these journals to scale up publications without adding overheads and costs, means that this is a system which is geared towards profitability.
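
To show how much the full-payment assumption matters, here is a minimal sketch that scales a gross estimate by the share of APC that goes unpaid. The 20% share is the figure quoted above. The gross amount is an invented round number, not one of the estimates in Figure 3, and `collected_apc` is a hypothetical helper name.

```python
def collected_apc(gross_chf, unpaid_pct):
    """Scale a gross APC estimate down by the percentage of APC not paid."""
    return gross_chf * (100 - unpaid_pct) / 100


# Invented gross figure, for illustration only.
gross_estimate = 1_000_000
print(collected_apc(gross_estimate, unpaid_pct=20))
```

This still gives an estimate of gross receipts only; it says nothing about costs or net revenue.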

Figure 3: Estimated Gross Revenues – APC of accepted papers 2015-2018 from 140 journals.

Publishers generally suffer from popular perceptions that they are exploitative and free-ride on academics’ labour. They do free-ride, but can counteract this by supporting more academic activities. Many of the activities publishers support (workshops, conferences, awards, sponsorship to attend conferences etc.) then tend to generate more content for their journals.

MDPI is active in this space. To give just one example, the APC rebates it offers to reviewers could, theoretically, have reached 28 million CHF from just the 140 journals I have examined. But MDPI is not as transparent about its giving as it could be. Given that its transparency on other aspects of its activity already raises the bar for other publishers, it could be similarly forthcoming with respect to its sponsorship of academic activity. That could drive welcome change across the sector.

Another possibility would be capping or reducing the APC charged for the most popular journals. A similar effect could be achieved by rewarding authors with rebates on the APC on future submissions. The size of these rebates could be linked to the journal’s performance in the year of publication – and thus authors share in the success that their writing helps to create.

Again, MDPI, there are concrete suggestions here to which I would like you to respond. Please could you publish what APC revenues you earned from each journal in each year – and what APC subsidy you have given out to authors and reviewers. Please make clear what other forms of support you are giving. I think if you lead on this then other publishers will have to follow. And, while you are at it, please could you make your data downloadable – it took me a while to copy them all out. Finally, please offer authors an APC rebate on future submissions that is linked to the success of the journal in which they have published – share the love!

- Methods and Acknowledgements

I copied data for submissions and rejection rates from the MDPI website for the 140 journals that began business in or before 2015 and which handled more than 10 papers in that year. I assumed that rejection rates in a given year applied to all papers submitted in that year. Rounding of some of the rejection rates means that minor discrepancies appear between the accepted papers in this spreadsheet and the published data.

I took APC charges from the website but have had to estimate likely charges in some instances where APC for early years were unavailable. In 2018 and 2019 APC changed every 6 months. I have therefore calculated the average APC for the year and applied that average to all papers published in that year. This is inaccurate as the lower fees apply to papers published in the first half of the year, and higher fees in the second half. Nevertheless it provides a satisfactory shorthand in the absence of better data.
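
The estimation steps described in this section can be sketched roughly as follows. This is an illustrative reconstruction under the stated assumptions, not the spreadsheet actually used: the field names, the function names and the example row are all hypothetical, and the yearly APC is taken as a simple mean of two half-year prices.

```python
from dataclasses import dataclass


@dataclass
class JournalYear:
    journal: str
    year: int
    submissions: int
    rejection_rate: float    # fraction of submissions rejected, e.g. 0.55
    apc_first_half: float    # CHF charged in the first six months
    apc_second_half: float   # CHF charged in the second six months


def accepted_papers(row):
    # Assumes the year's rejection rate applies to every submission that year.
    return round(row.submissions * (1 - row.rejection_rate))


def average_apc(row):
    # Shorthand: one average price for the whole year, although the lower
    # fee really applies to the first half and the higher fee to the second.
    return (row.apc_first_half + row.apc_second_half) / 2


def estimated_gross_revenue(row):
    # Assumes every accepted paper pays the averaged APC in full.
    return accepted_papers(row) * average_apc(row)


# Invented example row, for illustration only.
example = JournalYear("Hypothetical Journal", 2018, 1000, 0.55, 1200.0, 1400.0)
print(accepted_papers(example), average_apc(example), estimated_gross_revenue(example))
```

Because published rejection rates are rounded, accepted-paper counts computed this way can differ slightly from the published totals.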

The raw data I have used are available here.

My thanks to Clive Phillips for constructive engagement, key insights and critical commentary throughout.

Yours Faithfully,

Dan Brockington,

Glossop, UK,

December 2019

We would like to thank Dan Brockington for such an interesting analysis. We find his analysis is broadly accurate, and it is true that we have been growing by 50%–70% over the last few years. We also analyze the reasons for our success internally, because we are often surprised by this achievement ourselves. Among the reasons we have been growing so fast are: 1. We are mainly focused on providing the best service to the authors (we publish fast, we are very responsive and flexible, and we conduct proper peer-review as reflected by Publons or QOAM). 2. We offer strong administrative support to the Academic Editors, which gives them convenience but also helps us to be fast. 3. We care about each and every journal we publish irrespective of the revenue we receive (see, for example, Arts, which published 160+ articles this year entirely free of charge, or Religions, which published 600+ with an almost 90% waiver rate). MDPI publishes around 20 journals in the social sciences and humanities fields, most of them with very high waiver rates. We do it because we are fully committed to achieving the transition to the open access model in all fields. 4. We reinvest in hiring enough people to handle the increasing number of submissions, in order to keep a high level of service, and we also invest in developing platforms to improve the experience of scholars (e.g. Preprints, Scilit, Sci, SciProfiles, Encyclopedia).

We have been preparing and will soon share figures about the income and a breakdown of APC pricing. We will make it available on our website to meet the requirements of Plan S. A lot of the income from the popular journals, also charging a higher APC, is used to subsidize the young journals and the journals in poorly funded fields. Across all MDPI journals we waive 25%–30% of the content we publish. We use the profit or surplus to hire editors (a journal like Journal of Clinical Medicine saw a 10 times increase in submissions after receiving its first impact factor and we had to hire a high number of editors to handle these papers in a timely manner). We also need to cover the costs of rejected papers, which amount to 60% of submissions of which 23%–25% are rejected after peer-review (this year alone we have received over 240,000 submissions).

Regarding the benefits we offer to authors and reviewers, as you can see we offer many waivers and discounts. Some are directly offered by the Editorial offices, but we also offer discounts through an institutional open access program for universities and research organisations, and collaborations with societies. Reviewers can use discount vouchers they receive to reduce the APC when they submit their own papers. We have considered paying the reviewers, but the feedback from the scholars themselves was negative, as they saw this as bringing bias into scientific assessment. They prefer the vouchers. This year we have also offered travel grants as well as many other types of awards for scholars amounting to over 130,000 Swiss francs.

We are aware there are many aspects where we can still improve our service and we welcome constructive feedback.

Thank you,

Delia Mihaila

Chief Executive Officer

MDPI

Email: mihaila@mdpi.com

The sudden rise of the cheaper or free MDPI journals to US$950 APC effective from June 2019 was a major change, and may reflect the company’s growing confidence in the academic world. Previously, a lot of their social science and humanities journals were much cheaper than this. This price will push many authors out of the market, unable to afford this sort of cost, but it looks from Dan’s analysis as if the company will not care, since their income is large. They now share more similarities with the larger for-profit publishers than with the not-for-profit journal sector. I took them off my list.

Have you considered looking at the Frontiers journals in a similar manner?

Hi Dorothee, thanks for looking at this. This has been mentioned before. I’m not sure I could take it on. Keeping up with MDPI keeps me busy. But I also suspect that your comment singles out two publishing houses (Frontiers and MDPI) when they need to be considered as part of the broader ecosystem. After all, it’s not as if Elsevier solves any problems that MDPI may present. I’d love to collaborate with researchers working on the other academic presses.
